LLM performance comparison tool - AI tools

BenchLLM: The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

- Other

TheFastest.ai: Reliable performance measurements for popular LLM models.

TheFastest.ai provides reliable, daily-updated performance benchmarks for popular Large Language Models (LLMs), measuring Time To First Token (TTFT) and Tokens Per Second (TPS) across different regions and prompt types.

- Free

LLM Explorer: Discover and Compare Open-Source Language Models

LLM Explorer is a comprehensive platform for discovering, comparing, and accessing over 46,000 open-source Large Language Models (LLMs) and Small Language Models (SLMs).

- Free

Conviction: The Platform to Evaluate & Test LLMs

Conviction is an AI platform designed for evaluating, testing, and monitoring Large Language Models (LLMs) to help developers build reliable AI applications faster. It focuses on detecting hallucinations, optimizing prompts, and ensuring security.

- Freemium

- From $249

-

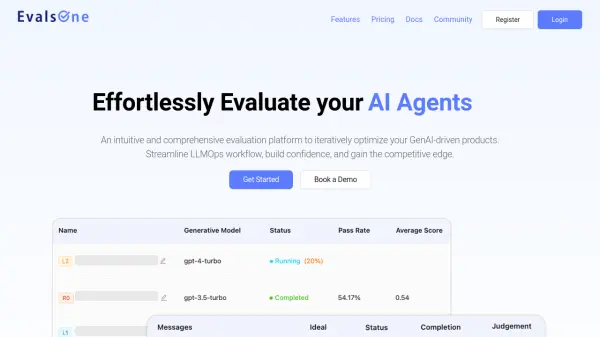

EvalsOne Evaluate LLMs & RAG Pipelines Quickly

EvalsOne Evaluate LLMs & RAG Pipelines QuicklyEvalsOne is a platform for rapidly evaluating Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) pipelines using various metrics.

- Freemium

- From $19

ModelBench: No-Code LLM Evaluations

ModelBench enables teams to rapidly deploy AI solutions with no-code LLM evaluations. It allows users to compare over 180 models, design and benchmark prompts, and trace LLM runs, accelerating AI development.

- Free Trial

- From $49

neutrino AI: Multi-model AI Infrastructure for Optimal LLM Performance

Neutrino AI provides multi-model AI infrastructure to optimize Large Language Model (LLM) performance for applications. It offers tools for evaluation, intelligent routing, and observability to enhance quality, manage costs, and ensure scalability.

- Usage Based

-

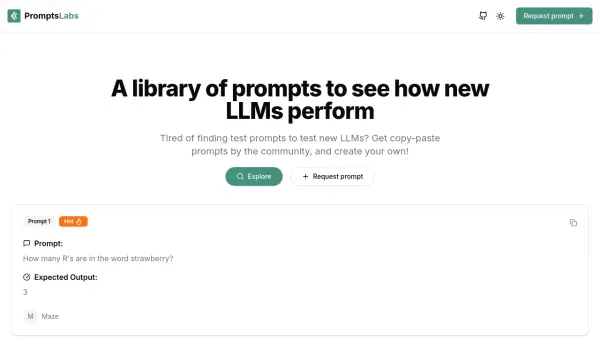

PromptsLabs A Library of Prompts for Testing LLMs

PromptsLabs A Library of Prompts for Testing LLMsPromptsLabs is a community-driven platform providing copy-paste prompts to test the performance of new LLMs. Explore and contribute to a growing collection of prompts.

- Free

-

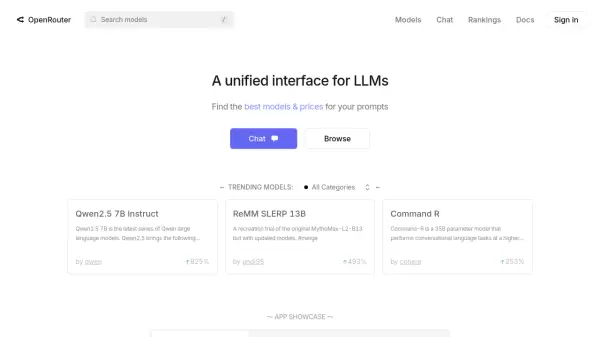

OpenRouter A unified interface for LLMs

OpenRouter A unified interface for LLMsOpenRouter provides a unified interface for accessing and comparing various Large Language Models (LLMs), offering users the ability to find optimal models and pricing for their specific prompts.

- Usage Based

Compare AI Models: AI Model Comparison Tool

Compare AI Models is a platform providing comprehensive comparisons and insights into various large language models, including GPT-4o, Claude, Llama, and Mistral.

- Freemium

Intura: Compare, Choose, and Save on AI & LLMs

Intura helps businesses experiment with, compare, and deploy AI and LLM models side-by-side to optimize performance and cost before full-scale implementation.

- Freemium

-

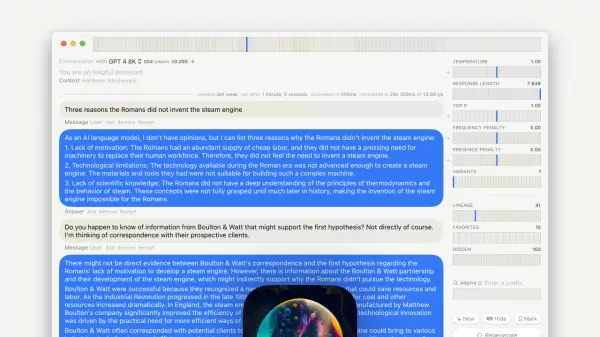

GPT–LLM Playground Your Comprehensive Testing Environment for Language Learning Models

GPT–LLM Playground Your Comprehensive Testing Environment for Language Learning ModelsGPT-LLM Playground is a macOS application designed for advanced experimentation and testing with Language Learning Models (LLMs). It offers features like multi-model support, versioning, and custom endpoints.

- Free

-

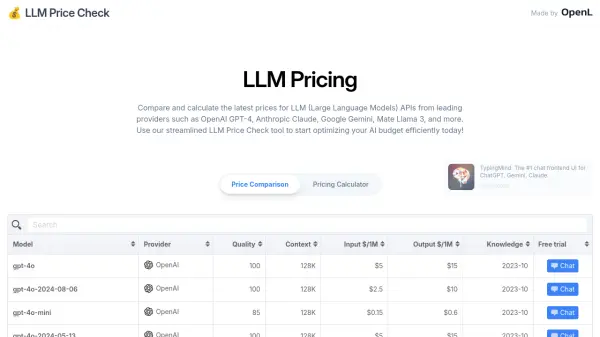

LLM Price Check Compare LLM Prices Instantly

LLM Price Check Compare LLM Prices InstantlyLLM Price Check allows users to compare and calculate prices for Large Language Model (LLM) APIs from providers like OpenAI, Anthropic, Google, and more. Optimize your AI budget efficiently.

- Free

MIOSN: Stop overthinking LLMs. Find the optimal model at the lowest cost.

MIOSN helps users find the most suitable and cost-effective Large Language Model (LLM) for their specific tasks by analyzing and comparing different models.

- Free

Reva: Use the right LLM for your task

Reva helps businesses test AI configurations and compare LLM outcomes to ensure optimal performance for their specific tasks, focusing on outcome-driven AI testing and model evaluation.

- Contact for Pricing

LLM Optimize: Rank Higher in AI Engines Recommendations

LLM Optimize provides professional website audits to help you rank higher in LLMs like ChatGPT and Google's AI Overview, outranking competitors with tailored, actionable recommendations.

- Paid

Superpipe: The OSS experimentation platform for LLM pipelines

Superpipe is an open-source experimentation platform designed for building, evaluating, and optimizing Large Language Model (LLM) pipelines to improve accuracy and minimize costs. It allows deployment on user infrastructure for enhanced privacy and security.

- Free

Libretto: LLM Monitoring, Testing, and Optimization

Libretto offers comprehensive LLM monitoring, automated prompt testing, and optimization tools to ensure the reliability and performance of your AI applications.

- Freemium

- From $180

LangWatch: Monitor, Evaluate & Optimize your LLM performance with 1-click

LangWatch empowers AI teams to ship 10x faster with quality assurance at every step. It provides tools to measure, maximize, and easily collaborate on LLM performance.

- Paid

- From $59

Gentrace: Intuitive evals for intelligent applications

Gentrace is an LLM evaluation platform designed for AI teams to test and automate evaluations of generative AI products and agents. It facilitates collaborative development and ensures high-quality LLM applications.

- Usage Based

OneLLM: Fine-tune, evaluate, and deploy your next LLM without code.

OneLLM is a no-code platform enabling users to fine-tune, evaluate, and deploy Large Language Models (LLMs) efficiently. Streamline LLM development by creating datasets, integrating API keys, running fine-tuning processes, and comparing model performance.

- Freemium

- From $19

Laminar: The AI engineering platform for LLM products

Laminar is an open-source platform that enables developers to trace, evaluate, label, and analyze Large Language Model (LLM) applications with minimal code integration.

- Freemium

- From $25

Petals: Run large language models at home, BitTorrent-style.

Petals enables users to run large language models like Llama 3.1 and Mixtral collaboratively, distributing the load across a network of consumer-grade GPUs.

- Free

LLMLingua Series: Effectively Deliver Information to LLMs via Prompt Compression

LLMLingua Series offers prompt compression techniques to accelerate Large Language Model (LLM) inference, reduce costs, and enhance performance, especially in long context scenarios.

- Other
