Openlayer - Alternatives & Competitors

The evaluation workspace for machine learning

Openlayer provides a secure, SOC 2 Type 2 compliant platform for testing, evaluation, and observability of machine learning models. With its seamless, 60-second onboarding and commit-style versioning, it makes data-driven ML evaluation painless and effective.

Ranked by Relevance

1

OpenLIT Open Source Platform for AI Engineering

OpenLIT is an open-source observability platform designed to streamline AI development workflows, particularly for Generative AI and LLMs, offering features like prompt management, performance tracking, and secure secrets management.

- Other

2

MLflow ML and GenAI made simple

MLflow is an open-source, end-to-end MLOps platform for building better models and generative AI apps. It simplifies complex ML and generative AI projects, offering comprehensive management from development to production.

- Free

3

Humanloop The LLM evals platform for enterprises to ship and scale AI with confidence

Humanloop is an enterprise-grade platform that provides tools for LLM evaluation, prompt management, and AI observability, enabling teams to develop, evaluate, and deploy trustworthy AI applications.

- Freemium

4

Freeplay The All-in-One Platform for AI Experimentation, Evaluation, and Observability

Freeplay provides comprehensive tools for AI teams to run experiments, evaluate model performance, and monitor production, streamlining the development process.

- Paid

- From $500

5

AI Studio The Executive Layer of your ML Environment

AI Studio is a comprehensive MLOps platform that provides enterprise-level tools for machine learning governance, monitoring, and deployment. It enables companies to streamline their ML operations with real-time insights and automated workflows.

- Freemium

6

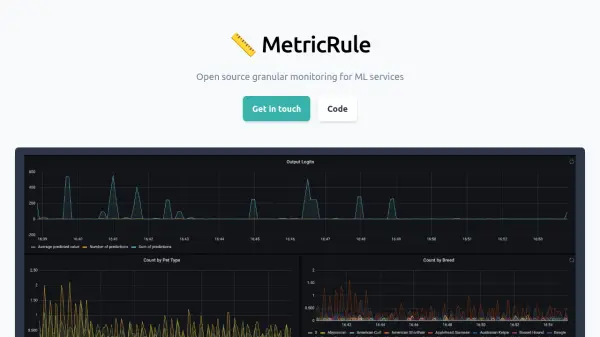

MetricRule Open source granular monitoring for ML services

MetricRule is an open-source tool offering granular monitoring for machine learning models in production, tracking performance, feature drift, and data anomalies.

- Free

7

Autoblocks Improve your LLM Product Accuracy with Expert-Driven Testing & Evaluation

Autoblocks is a collaborative testing and evaluation platform for LLM-based products that automatically improves through user and expert feedback, offering comprehensive tools for monitoring, debugging, and quality assurance.

- Freemium

- From $1,750

8

Nyckel Build ML models without a PhD

Nyckel is a platform that enables rapid development and deployment of custom machine learning models with high accuracy and security, requiring no advanced ML expertise.

- Contact for Pricing

9

Experience Layer Onboard new users better, wherever they are

Experience Layer is an AI-powered user onboarding platform that helps businesses guide users through workflows, promote feature adoption, and share release notes to improve product experience and reduce support requests.

- Freemium

- From $99

10

EchoLayer Resolve security vulnerabilities at the speed of light

EchoLayer is an AI-powered security attribution platform that helps engineering teams quickly identify and resolve code vulnerabilities by finding the most qualified people with relevant context.

- Contact for Pricing
