Gentrace - Alternatives & Competitors

Intuitive evals for intelligent applications

Gentrace is an LLM evaluation platform designed for AI teams to test and automate evaluations of generative AI products and agents. It facilitates collaborative development and ensures high-quality LLM applications.

Ranked by Relevance

-

1

BenchLLM The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

- Other

-

2

Braintrust The end-to-end platform for building world-class AI apps.

Braintrust provides an end-to-end platform for developing, evaluating, and monitoring Large Language Model (LLM) applications. It helps teams build robust AI products through iterative workflows and real-time analysis.

- Freemium

- From 249$

-

3

Agenta End-to-End LLM Engineering Platform

Agenta is an LLM engineering platform offering tools for prompt engineering, versioning, evaluation, and observability in a single, collaborative environment.

- Freemium

- From 49$

-

4

Humanloop The LLM evals platform for enterprises to ship and scale AI with confidence

Humanloop is an enterprise-grade platform that provides tools for LLM evaluation, prompt management, and AI observability, enabling teams to develop, evaluate, and deploy trustworthy AI applications.

- Freemium

-

5

Laminar The AI engineering platform for LLM products

Laminar is an open-source platform that enables developers to trace, evaluate, label, and analyze Large Language Model (LLM) applications with minimal code integration.

- Freemium

- From 25$

-

6

Langfuse Open Source LLM Engineering Platform

Langfuse provides an open-source platform for tracing, evaluating, and managing prompts to debug and improve LLM applications.

- Freemium

- From 59$

-

7

Langtrace Transform AI Prototypes into Enterprise-Grade Products

Langtrace is an open-source observability and evaluations platform designed to help developers monitor, evaluate, and enhance AI agents for enterprise deployment.

- Freemium

- From 31$

-

8

OpenLIT Open Source Platform for AI Engineering

OpenLIT is an open-source observability platform designed to streamline AI development workflows, particularly for Generative AI and LLMs, offering features like prompt management, performance tracking, and secure secrets management.

- Other

-

9

Langtail The low-code platform for testing AI apps

Langtail is a comprehensive testing platform that enables teams to test and debug LLM-powered applications with a spreadsheet-like interface, offering security features and integration with major LLM providers.

- Freemium

- From 99$

-

10

Hegel AI Developer Platform for Large Language Model (LLM) Applications

Hegel AI provides a developer platform for building, monitoring, and improving large language model (LLM) applications, featuring tools for experimentation, evaluation, and feedback integration.

- Contact for Pricing

-

11

ModelBench No-Code LLM Evaluations

ModelBench enables teams to rapidly deploy AI solutions with no-code LLM evaluations. It allows users to compare over 180 models, design and benchmark prompts, and trace LLM runs, accelerating AI development.

- Free Trial

- From 49$

-

12

Adaline Ship reliable AI faster

Adaline is a collaborative platform for teams building with Large Language Models (LLMs), enabling efficient iteration, evaluation, deployment, and monitoring of prompts.

- Contact for Pricing

-

13

EvalsOne Evaluate LLMs & RAG Pipelines Quickly

EvalsOne is a platform for rapidly evaluating Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) pipelines using various metrics.

- Freemium

- From 19$

-

14

Conviction The Platform to Evaluate & Test LLMs

Conviction is an AI platform designed for evaluating, testing, and monitoring Large Language Models (LLMs) to help developers build reliable AI applications faster. It focuses on detecting hallucinations, optimizing prompts, and ensuring security.

- Freemium

- From 249$

-

15

PromptsLabs A Library of Prompts for Testing LLMs

PromptsLabs is a community-driven platform providing copy-paste prompts to test the performance of new LLMs. Explore and contribute to a growing collection of prompts.

- Free

-

16

MLflow ML and GenAI made simple

MLflow is an open-source, end-to-end MLOps platform for building better models and generative AI apps. It simplifies complex ML and generative AI projects, offering comprehensive management from development to production.

- Free

-

17

Parea Test and Evaluate your AI systems

Parea is a platform for testing, evaluating, and monitoring Large Language Model (LLM) applications, helping teams track experiments, collect human feedback, and deploy prompts confidently.

- Freemium

- From 150$

-

18

NeuralTrust Secure, test, & scale LLMs

NeuralTrust offers a unified platform for securing, testing, monitoring, and scaling Large Language Model (LLM) applications, ensuring robust security, regulatory compliance, and operational control for enterprises.

- Contact for Pricing

-

19

Autoblocks Improve your LLM Product Accuracy with Expert-Driven Testing & Evaluation

Autoblocks is a collaborative testing and evaluation platform for LLM-based products that automatically improves through user and expert feedback, offering comprehensive tools for monitoring, debugging, and quality assurance.

- Freemium

- From 1750$

-

20

Freeplay The All-in-One Platform for AI Experimentation, Evaluation, and Observability

Freeplay provides comprehensive tools for AI teams to run experiments, evaluate model performance, and monitor production, streamlining the development process.

- Paid

- From 500$

-

21

Helicone Ship your AI app with confidence

Helicone is an all-in-one platform for monitoring, debugging, and improving production-ready LLM applications. It provides tools for logging, evaluating, experimenting, and deploying AI applications.

- Freemium

- From 20$

-

22

LangWatch Monitor, Evaluate & Optimize your LLM performance with 1-click

LangWatch empowers AI teams to ship 10x faster with quality assurance at every step. It provides tools to measure, maximize, and easily collaborate on LLM performance.

- Paid

- From 59$

-

23

Promptech The AI teamspace to streamline your workflows

Promptech is a collaborative AI platform that provides prompt engineering tools and teamspace solutions for organizations to effectively utilize Large Language Models (LLMs). It offers access to multiple AI models, workspace management, and enterprise-ready features.

- Paid

- From 20$

-

24

Literal AI Ship reliable LLM Products

Literal AI streamlines the development of LLM applications, offering tools for evaluation, prompt management, logging, monitoring, and more to build production-grade AI products.

- Freemium

-

25

phoenix.arize.com Open-source LLM tracing and evaluation

Phoenix accelerates AI development with powerful insights, allowing seamless evaluation, experimentation, and optimization of AI applications in real time.

- Freemium

-

26

Keywords AI LLM monitoring for AI startups

Keywords AI is a comprehensive developer platform for LLM applications, offering monitoring, debugging, and deployment tools. It serves as a Datadog-like solution specifically designed for LLM applications.

- Freemium

- From 7$

-

27

klu.ai Next-gen LLM App Platform for Confident AI Development

Klu is an all-in-one LLM App Platform that enables teams to experiment, version, and fine-tune GPT-4 Apps with collaborative prompt engineering and comprehensive evaluation tools.

- Freemium

- From 30$

-

28

Ottic QA for LLM products done right

Ottic empowers tech and non-technical teams to test LLM applications, ensuring faster product development and enhanced reliability. Streamline your QA process and gain full visibility into your LLM application's behavior.

- Contact for Pricing

-

29

Arize Unified Observability and Evaluation Platform for AI

Arize is a comprehensive platform designed to accelerate the development and improve the production of AI applications and agents.

- Freemium

- From 50$

-

30

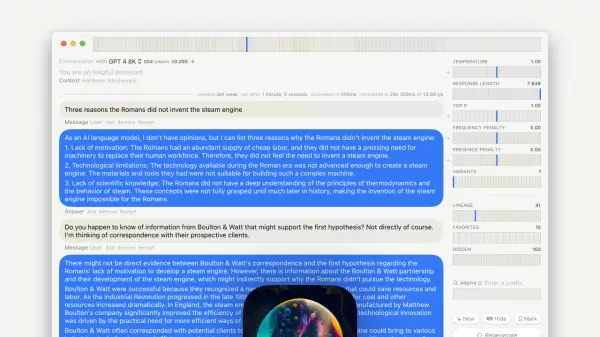

GPT–LLM Playground Your Comprehensive Testing Environment for Language Learning Models

GPT-LLM Playground is a macOS application designed for advanced experimentation and testing with Large Language Models (LLMs). It offers features like multi-model support, versioning, and custom endpoints.

- Free

-

31

Transformer Lab The Open Source Platform for Training Advanced AI Models

Transformer Lab is an open-source platform enabling researchers, ML engineers, and developers to collaboratively build, train, evaluate, and deploy AI models with features like provenance, reproducibility, and transparency.

- Free

-

32

Promptotype The platform for structured prompt engineering

Promptotype is a platform designed for structured prompt engineering, enabling users to develop, test, and monitor LLM tasks efficiently.

- Freemium

- From 6$

-

33

Scorecard.io Testing for production-ready LLM applications, RAG systems, Agents, Chatbots.

Scorecard.io is an evaluation platform designed for testing and validating production-ready Generative AI applications, including LLMs, RAG systems, agents, and chatbots. It supports the entire AI production lifecycle from experiment design to continuous evaluation.

- Contact for Pricing

-

34

Libretto LLM Monitoring, Testing, and Optimization

Libretto offers comprehensive LLM monitoring, automated prompt testing, and optimization tools to ensure the reliability and performance of your AI applications.

- Freemium

- From 180$

-

35

LLM Pulse Track your brand's presence across AI search effortlessly

LLM Pulse is a real-time brand monitoring platform that tracks and analyzes your brand's visibility across major Large Language Models like ChatGPT and Google AI, helping businesses understand and improve their presence in AI-generated content.

- Paid

- From 49$

-

36

Reprompt Collaborative prompt testing for confident AI deployment

Reprompt is a developer-focused platform that enables efficient testing and optimization of AI prompts with real-time analysis and comparison capabilities.

- Usage Based

-

37

Rhesis AI Open-source test generation SDK for LLM applications

Rhesis AI offers an open-source SDK to generate comprehensive, context-specific test sets for LLM applications, enhancing AI evaluation, reliability, and compliance.

- Freemium

-

38

Requesty Develop, Deploy, and Monitor AI with Confidence

Requesty is a platform for faster AI development, deployment, and monitoring. It provides tools for refining LLM applications, analyzing conversational data, and extracting actionable insights.

- Usage Based

-

39

LLM Explorer Discover and Compare Open-Source Language Models

LLM Explorer is a comprehensive platform for discovering, comparing, and accessing over 46,000 open-source Large Language Models (LLMs) and Small Language Models (SLMs).

- Free

-

40

Intura Compare, Choose, and Save on AI & LLMs

Intura helps businesses experiment with, compare, and deploy AI and LLM models side-by-side to optimize performance and cost before full-scale implementation.

- Freemium

-

41

Compare AI Models AI Model Comparison Tool

Compare AI Models is a platform providing comprehensive comparisons and insights into various large language models, including GPT-4o, Claude, Llama, and Mistral.

- Freemium

-

42

Autumn8 Compliance Assessment for Gen AI Applications

Autumn8 streamlines enterprise compliance assessments for Gen AI applications, significantly reducing preparation time and improving acceptance rates through automated document analysis.

- Contact for Pricing

-

43

OneLLM Fine-tune, evaluate, and deploy your next LLM without code.

OneLLM is a no-code platform enabling users to fine-tune, evaluate, and deploy Large Language Models (LLMs) efficiently. Streamline LLM development by creating datasets, integrating API keys, running fine-tuning processes, and comparing model performance.

- Freemium

- From 19$

-

44

Kosmoy Accelerate GenAI Adoption with Robust Governance and Monitoring

Kosmoy is a platform for controlling, building, and monitoring enterprise AI applications at scale, providing governance, observability, and security features. It centralizes LLM interactions, tracks costs and user feedback, and ensures compliance.

- Freemium

-

45

neutrino AI Multi-model AI Infrastructure for Optimal LLM Performance

Neutrino AI provides multi-model AI infrastructure to optimize Large Language Model (LLM) performance for applications. It offers tools for evaluation, intelligent routing, and observability to enhance quality, manage costs, and ensure scalability.

- Usage Based

-

46

SysPrompt The collaborative prompt CMS for LLM engineers

SysPrompt is a collaborative Content Management System (CMS) designed for LLM engineers to manage, version, and collaborate on prompts, facilitating faster development of better LLM applications.

- Paid

-

47

Unify Build AI Your Way

Unify provides tools to build, test, and optimize LLM pipelines with custom interfaces and a unified API for accessing all models across providers.

- Freemium

- From 40$

-

48

TruEra AI Observability and Diagnostics Platform

TruEra offers an AI observability and diagnostics platform to monitor and improve the quality of machine learning models. It aids in building trustworthy AI solutions across various industries.

- Contact for Pricing

-

49

LLMO Metrics Track and boost your brand presence in AI responses

LLMO Metrics tracks and optimizes your brand's visibility across major AI models like ChatGPT, Gemini, and Copilot. Monitor competitor rankings and ensure accurate brand representation in AI-generated answers.

- Free Trial

- From 87$

-

50

PromptMage A Python framework for simplified LLM-based application development

PromptMage is a Python framework that streamlines the development of complex, multi-step applications powered by Large Language Models (LLMs), offering version control, testing capabilities, and automated API generation.

- Other
