What is GeneratorLLMs?

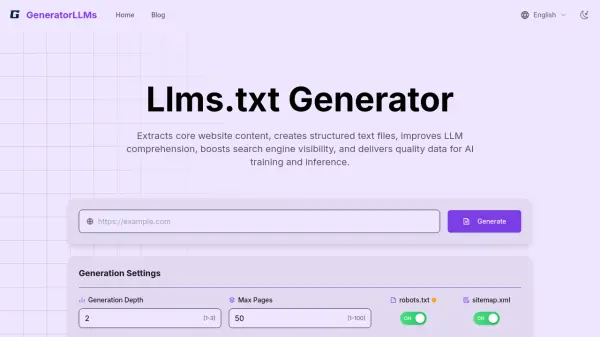

GeneratorLLMs crawls a website and generates an `llms.txt` file from its content. By offering high-quality, structured content in Markdown format, it helps LLMs comprehend website information more accurately during inference, potentially reducing hallucinations. This structured semantic information also helps AI-driven search engines understand a website's core value propositions and content structure, with the aim of increasing visibility in intent-oriented searches. The tool lets you set crawl parameters such as depth and page count, and it respects existing standards such as robots.txt.
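As an illustration of what respecting robots.txt can look like in practice, here is a minimal sketch using Python's standard `urllib.robotparser`. It is not GeneratorLLMs' actual implementation: the robots.txt body, the `example-llms-bot` user agent, and the `build_robots_checker` helper are all assumptions made for the example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body. A real crawler would download this from
# https://<host>/robots.txt before requesting any other page.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /
"""


def build_robots_checker(robots_txt: str, user_agent: str = "example-llms-bot"):
    """Return a function that reports whether a URL may be fetched."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in memory, no network access

    def allowed(url: str) -> bool:
        return parser.can_fetch(user_agent, url)

    return allowed


allowed = build_robots_checker(ROBOTS_TXT)
print(allowed("https://example.com/docs/intro"))      # True
print(allowed("https://example.com/admin/settings"))  # False
```

A crawler built this way would call `allowed(url)` before each request and skip any URL for which it returns `False`.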

Features

- Intelligent Website Crawler: Automatically analyzes website structure and extracts key content.

- Content Optimization Processing: Cleans HTML noise, retains core text, and presents it in Markdown format.

- llms.txt Standard Compliance: Generates files adhering to the `llms.txt` standard format.

- Batch Processing Capability: Supports setting crawl depth (1-3) and maximum page count (1-100).

- Smart Link Processing: Identifies and processes internal links to build structured content relationships.

- One-Click Export and Share: Allows easy download, copy, or sharing of generated files.

- Customizable Crawling: Lets you set crawl depth and maximum page count, respect robots.txt, and prioritize sitemap.xml.
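The crawl limits listed above (depth 1-3, at most 1-100 pages, internal links only) can be sketched as a bounded breadth-first traversal. This is a hedged illustration, not the tool's real crawler: the `crawl` and `LinkCollector` names are hypothetical, the page fetcher is passed in by the caller so the example runs offline, and it assumes that depth 1 means "the start page only".

```python
from collections import deque
from html.parser import HTMLParser
from typing import Callable, Optional
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(
    start_url: str,
    fetch: Callable[[str], Optional[str]],
    max_depth: int = 2,
    max_pages: int = 20,
) -> dict[str, str]:
    """Breadth-first crawl of same-host links, bounded by depth and page count.

    `fetch` returns the HTML for a URL, or None if the page is unavailable.
    """
    # Clamp to the ranges the feature list mentions (assumed to be hard limits).
    max_depth = min(max(max_depth, 1), 3)
    max_pages = min(max(max_pages, 1), 100)

    host = urlparse(start_url).netloc
    pages: dict[str, str] = {}
    seen = {start_url}
    queue = deque([(start_url, 1)])  # assumption: the start page is depth 1

    while queue and len(pages) < max_pages:
        url, depth = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        pages[url] = html
        if depth >= max_depth:
            continue  # do not follow links beyond the depth limit
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.links:
            link = urldefrag(urljoin(url, href)).url  # absolute URL, no #fragment
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append((link, depth + 1))
    return pages


# A tiny in-memory "site" standing in for real HTTP requests.
SITE = {
    "https://example.com/": '<a href="/docs">Docs</a> <a href="https://other.example/x">Ext</a>',
    "https://example.com/docs": '<a href="/docs/api">API</a> <a href="/#top">Home</a>',
    "https://example.com/docs/api": "<p>API reference</p>",
}

pages = crawl("https://example.com/", SITE.get, max_depth=2, max_pages=10)
print(sorted(pages))  # ['https://example.com/', 'https://example.com/docs']
```

Passing `fetch` as a parameter keeps the traversal logic separate from networking, so a robots.txt check or a sitemap-first ordering could be layered on without changing the loop.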

Use Cases

- Improving LLM understanding of website content.

- Enhancing website visibility in AI-driven search engines.

- Creating standardized data sources for AI training.

- Providing condensed website summaries for LLM inference.

- Generating structured content for RAG systems.

- Facilitating communication between websites and AI models.
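For the RAG use case, one simple way to consume generated Markdown is to split it into one chunk per second-level heading before indexing. The sketch below assumes the file uses `## ` headings for its sections; the `split_by_h2` helper and the sample text are hypothetical and only illustrate the idea.

```python
def split_by_h2(markdown: str) -> list[dict]:
    """Split Markdown into chunks, one per '## ' heading.

    Text before the first '## ' heading becomes a chunk with an empty heading.
    """
    chunks: list[dict] = []
    heading = ""
    body: list[str] = []

    def flush() -> None:
        # Only emit a chunk that has a heading or some non-blank text.
        if heading or any(line.strip() for line in body):
            chunks.append({"heading": heading, "text": "\n".join(body).strip()})

    for line in markdown.splitlines():
        if line.startswith("## "):
            flush()
            heading, body = line[3:].strip(), []
        else:
            body.append(line)
    flush()
    return chunks


# Hypothetical generated content, used only to exercise the function.
SAMPLE = """\
# Example Site

> A short summary of the site.

## Docs

- [Getting started](https://example.com/docs/start): Install and first steps

## Blog

- [Release notes](https://example.com/blog/releases)
"""

chunks = split_by_h2(SAMPLE)
for chunk in chunks:
    print(repr(chunk["heading"]), len(chunk["text"]))
```

Each chunk keeps its heading, which can be stored as metadata alongside the embedded text.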

FAQs

- What is the llms.txt standard and what is it used for?

llms.txt is an emerging website standard designed to provide structured, condensed website content for large language models (LLMs). It helps models better understand and utilize website information by offering a standardized format, addressing LLM limitations in processing entire websites.

- What types of websites does the generator support?

The generator supports most publicly accessible websites, including company sites, blogs, documentation sites, e-commerce platforms, and educational resources.

- Does the generated content need manual editing?

While the automatically generated content is often sufficient, manual adjustments might be needed if you have specific content priorities or emphasis requirements.

- What crawling parameters can I set?

You can set crawl depth (1-3 levels), maximum page count (1-100), and choose whether to respect robots.txt or prioritize sitemap.xml during crawling.

- Where should I place the generated llms.txt file on my website?

The llms.txt file should be placed in the root directory of your website (e.g., example.com/llms.txt), similar to robots.txt.
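To make the file format more concrete, here is a minimal sketch that renders an `llms.txt` body in the layout commonly described for the llms.txt proposal: an H1 title, a blockquote summary, and H2 sections containing Markdown link lists. The `render_llms_txt` function, the sample site, and its URLs are assumptions for illustration; the exact output of GeneratorLLMs may differ, so check the published llms.txt specification before relying on this layout.

```python
def render_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt body.

    `sections` maps a section heading to (link name, URL, optional note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            entry = f"- [{name}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site data, for illustration only.
text = render_llms_txt(
    title="Example Site",
    summary="A short summary of what the site offers.",
    sections={
        "Docs": [
            ("Getting started", "https://example.com/docs/start", "Install and first steps"),
            ("API reference", "https://example.com/docs/api", ""),
        ],
    },
)
print(text)
```

The resulting string would then be saved as `llms.txt` in the site's root directory so that it is served at a URL such as example.com/llms.txt.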