PromptsLabs Uptime Monitor

A Library of Prompts for Testing LLMs

Last 30 Days Performance

Average Uptime

94.52%

Based on 30-day monitoring period

Average Response Time

117.07ms

Mean response time across all checks
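The page does not say how these two figures are derived. As a minimal sketch, assuming each check is recorded as a success flag plus an optional latency, the percentages and means could be computed as below. The `Check` record and both function names are hypothetical, and averaging only the checks that returned a response is an assumption, not the monitor's documented behavior.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Check:
    """One probe of the monitored site (hypothetical record shape)."""
    ok: bool                      # True if the site responded successfully
    response_ms: Optional[float]  # latency in ms, None if there was no response


def uptime_percent(checks: Sequence[Check]) -> float:
    """Share of successful checks as a percentage, rounded to 2 places."""
    if not checks:
        return 0.0
    up = sum(1 for c in checks if c.ok)
    return round(100.0 * up / len(checks), 2)


def mean_response_ms(checks: Sequence[Check]) -> float:
    """Mean latency over checks that returned a response; 0.0 if none did."""
    times = [c.response_ms for c in checks if c.response_ms is not None]
    if not times:
        return 0.0
    return round(sum(times) / len(times), 2)


sample = [Check(True, 120.0), Check(True, 100.0), Check(False, None), Check(True, 140.0)]
print(uptime_percent(sample))    # 75.0
print(mean_response_ms(sample))  # 120.0
```

Under this assumption, a period with no successful checks reports 0% uptime and a 0ms response time.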

Daily Status Overview

Historical Performance

| Month | Monthly Uptime | Monthly Response Time |
| --- | --- | --- |
| Dec-2025 | 58.58% | 52ms |
| Nov-2025 | 0% | 0ms |
| Oct-2025 | 56.9% | 195ms |
| Sep-2025 | 99.68% | 147ms |
| Aug-2025 | 88% | 100ms |
| Jul-2025 | 64.81% | 69ms |
| Jun-2025 | 100% | 126ms |
| May-2025 | 100% | 134ms |
| Apr-2025 | 100% | 140ms |

Related Uptime Monitors

Explore uptime status for similar tools that also have monitoring enabled.

- Promptotype (Operational)

The platform for structured prompt engineering

Promptotype is a platform designed for structured prompt engineering, enabling users to develop, test, and monitor LLM tasks efficiently.

Last checked: 1 hour ago

- Prompt Hippo (Issues)

Test and Optimize LLM Prompts with Science.

Prompt Hippo is an AI-powered testing suite for Large Language Model (LLM) prompts, designed to improve their robustness, reliability, and safety through side-by-side comparisons.

Last checked: 1 hour ago

- ModelBench (Operational)

No-Code LLM Evaluations

ModelBench enables teams to rapidly deploy AI solutions with no-code LLM evaluations. It allows users to compare over 180 models, design and benchmark prompts, and trace LLM runs, accelerating AI development.

Last checked: 1 hour ago

- BenchLLM (Operational)

The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

Last checked: 6 hours ago

- promptfoo (Operational)

Test & secure your LLM apps with open-source LLM testing

promptfoo is an open-source LLM testing tool designed to help developers secure and evaluate their language model applications, offering features like vulnerability scanning and continuous monitoring.

Last checked: 50 minutes ago View Status -

Operational

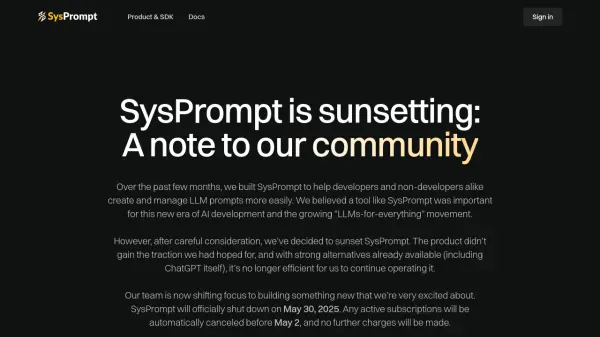

OperationalSysPrompt

The collaborative prompt CMS for LLM engineers

SysPrompt is a collaborative Content Management System (CMS) designed for LLM engineers to manage, version, and collaborate on prompts, facilitating faster development of better LLM applications.

Last checked: 1 hour ago