What is Onehouse?

Onehouse delivers a universal data lakehouse platform designed for speed, openness, and cost-efficiency. Built by the original creators of Apache Hudi, it facilitates near real-time data ingestion, incremental data transformations, and automated table optimizations. This approach improves performance, significantly reducing query times and ETL pipeline processing times, which makes it suitable for demanding workloads such as database change data capture (CDC).

The platform emphasizes flexibility and interoperability, supporting major open table formats like Apache Hudi, Apache Iceberg, and Delta Lake through its open-source Apache XTable technology. This allows users to switch between formats and query the same data from engines such as Amazon Athena, Google BigQuery, Snowflake, Redshift, Databricks, and Apache Spark without migrating it. Onehouse runs on major cloud providers (AWS and GCP, with Azure coming soon) and offers managed services, including automated vector embeddings for AI applications, aiming to simplify data operations, reduce engineering overhead, and lower data warehousing costs.

Features

- Lightning-Fast Data Ingestion: Handles tough CDC workloads in near real-time with managed ELT.

- Universal Data Storage: Supports Apache Hudi, Apache Iceberg, and Delta Lake formats via Apache XTable.

- Unmatched Flexibility: Allows seamless switching between formats and engines without data migration.

- Query Anywhere: Supports various cloud-native engines and warehouses (Athena, BigQuery, Snowflake, Redshift, Databricks, EMR).

- Best-in-Class Performance: Offers 4-10x faster ELT/ETL pipelines and 2-30x faster queries via incremental processing and automatic optimizations.

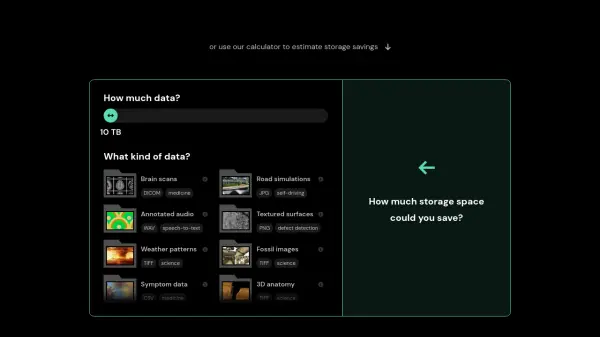

- Superior Cost-Efficiency: Reduces data warehousing costs by 50% or more and minimizes query scan costs.

- Managed Services (Onehouse Cloud): Provides fully managed operations, automated tuning, monitoring, and governance.

- Multi-Catalog Synchronization: Syncs data across Snowflake, Databricks, BigQuery, etc., from a single pipeline.

- Open Engines: Deploy open-source compute engines (Spark) against lakehouse data.

- Automated Vector Embeddings: Generates vector embeddings from data directly within the lakehouse.
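
To make the "incremental processing" idea behind the performance claims above more concrete, here is a minimal, hedged sketch in plain Python. It is not the Onehouse or Apache Hudi API; the `Commit` class, the `incremental_read` function, and the sample order rows are hypothetical names invented for illustration. The sketch assumes each write to a table is recorded as a commit with an increasing commit time, and that a pipeline remembers the last commit it processed so later runs read only newer changes instead of rescanning the whole table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    """One batch of changed rows, stamped with an increasing commit time (hypothetical)."""
    commit_time: int
    rows: tuple


def incremental_read(timeline, last_checkpoint):
    """Return rows from commits newer than last_checkpoint, plus the new checkpoint."""
    new_commits = [c for c in timeline if c.commit_time > last_checkpoint]
    rows = [row for c in new_commits for row in c.rows]
    # If nothing new arrived, the checkpoint stays where it was.
    new_checkpoint = max((c.commit_time for c in new_commits), default=last_checkpoint)
    return rows, new_checkpoint


# Illustrative table history: two commits of (order_id, amount) rows.
timeline = [
    Commit(1, (("order-1", 10), ("order-2", 20))),
    Commit(2, (("order-3", 30),)),
]

# First run: nothing has been processed yet, so every commit is read.
rows, checkpoint = incremental_read(timeline, last_checkpoint=0)

# A new commit lands; the next run reads only that commit.
timeline.append(Commit(3, (("order-2", 25),)))
delta, checkpoint = incremental_read(timeline, checkpoint)
```

In this toy model the second run touches one row rather than four, which is the general reason incremental pipelines can cost less than full recomputation. A real lakehouse table tracks commits in table metadata rather than in an in-memory list.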

Use Cases

- Accelerating near real-time data ingestion from databases, streams, and storage.

- Optimizing data lakehouse tables (Hudi, Iceberg, Delta Lake) for faster queries.

- Reducing data warehouse costs by offloading transformations.

- Managing and scaling Apache Hudi lakehouses with enterprise support.

- Generating and managing vector embeddings for Generative AI applications.

- Enabling BI, reporting, and real-time analytics.

- Supporting Data Science and Machine Learning workflows.

- Building efficient data engineering pipelines.