LLM Explorer Uptime Monitor

Discover and Compare Open-Source Language Models

Last 30 Days Performance

Average Uptime

0%

Based on 30-day monitoring period

Average Response Time

0ms

Mean response time across all checks
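As a rough illustration of how the two summary figures above could be derived from individual checks, here is a minimal sketch. The page does not document the monitor's actual method: the `Check` record, the `summarize` helper, and the choice to include failed checks in the mean response time are all assumptions made for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Check:
    """One monitoring probe (hypothetical record shape, not the monitor's schema)."""
    ok: bool            # True if the probe succeeded
    response_ms: float  # measured response time in milliseconds


def summarize(checks):
    """Return (uptime percent, mean response time in ms) for a list of checks.

    Assumption: uptime is the share of successful checks, and the mean
    response time is taken across all checks, successful or not.
    """
    if not checks:
        # No data in the window: report zeros rather than dividing by zero.
        return 0.0, 0.0
    uptime = 100.0 * sum(c.ok for c in checks) / len(checks)
    avg_ms = mean(c.response_ms for c in checks)
    return round(uptime, 2), round(avg_ms)


if __name__ == "__main__":
    sample = [
        Check(True, 1200),
        Check(True, 1400),
        Check(False, 1600),
        Check(True, 1000),
    ]
    uptime, avg_ms = summarize(sample)
    print(f"{uptime}% uptime, {avg_ms}ms mean response time")
```

A real monitor might instead weight checks by time or exclude failed probes from the response-time average, so treat the numbers this produces as illustrative only.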

Daily Status Overview

Historical Performance

Jan-2026

100% uptime

Monthly Uptime

100%

Monthly Response Time

1413ms

Daily Status Breakdown

Dec-2025

99.57% uptime

Monthly Uptime

99.57%

Monthly Response Time

1431ms

Daily Status Breakdown

Nov-2025

98.41% uptime

Monthly Uptime

98.41%

Monthly Response Time

1421ms

Daily Status Breakdown

Oct-2025

100% uptime

Monthly Uptime

100%

Monthly Response Time

1348ms

Daily Status Breakdown

Sep-2025

99.4% uptime

Monthly Uptime

99.4%

Monthly Response Time

1261ms

Daily Status Breakdown

Aug-2025

99.72% uptime

Monthly Uptime

99.72%

Monthly Response Time

1226ms

Daily Status Breakdown

Jul-2025

99.48% uptime

Monthly Uptime

99.48%

Monthly Response Time

1249ms

Daily Status Breakdown

Jun-2025

99.6% uptime

Monthly Uptime

99.6%

Monthly Response Time

1289ms

Daily Status Breakdown

Related Uptime Monitors

Explore uptime status for similar tools that also have monitoring enabled.

Compare AI Models

Status: Issues

AI Model Comparison Tool

Compare AI Models is a platform providing comprehensive comparisons and insights into various large language models, including GPT-4o, Claude, Llama, and Mistral.

Last checked: 1 month ago

Operational

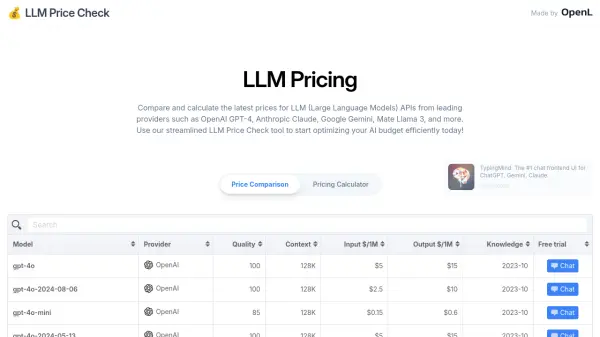

OperationalLLM Price Check

Compare LLM Prices Instantly

LLM Price Check allows users to compare and calculate prices for Large Language Model (LLM) APIs from providers like OpenAI, Anthropic, Google, and more. Optimize your AI budget efficiently.

Last checked: 1 month ago

BenchLLM

Status: Operational

The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

Last checked: 1 month ago

ModelBench

Status: Operational

No-Code LLM Evaluations

ModelBench enables teams to rapidly deploy AI solutions with no-code LLM evaluations. It allows users to compare over 180 models, design and benchmark prompts, and trace LLM runs, accelerating AI development.

Last checked: 1 month ago

OpenRouter

Status: Operational

A unified interface for LLMs

OpenRouter provides a unified interface for accessing and comparing various Large Language Models (LLMs), offering users the ability to find optimal models and pricing for their specific prompts.

Last checked: 1 month ago

LLM Pricing

Status: Operational

A comprehensive pricing comparison tool for Large Language Models

LLM Pricing is a website that aggregates and compares pricing information for various Large Language Models (LLMs) from official AI providers and cloud service vendors.

Last checked: 1 month ago