Adaptive ML Uptime Monitor

AI, Tuned to Production.

Last 30 Days Performance

Average Uptime

100%

Based on 30-day monitoring period

Average Response Time

131.67ms

Mean response time across all checks
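As a rough illustration of how figures like the two above could be derived, here is a minimal sketch. It assumes each check records a pass/fail result and a response time in milliseconds. The Check type, the summarize function, and the sample values are hypothetical, not taken from this monitor.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Check:
    """One monitoring probe (hypothetical record shape)."""
    ok: bool            # True if the endpoint responded successfully
    response_ms: float  # measured response time in milliseconds


def summarize(checks):
    """Return (uptime percentage, mean response time in ms) over all checks."""
    if not checks:
        raise ValueError("no checks recorded")
    # Uptime is the share of successful checks, expressed as a percentage.
    uptime_pct = 100.0 * sum(1 for c in checks if c.ok) / len(checks)
    # Mean response time is taken across every check, passed or failed.
    mean_ms = mean([c.response_ms for c in checks])
    return round(uptime_pct, 2), round(mean_ms, 2)


# Made-up sample data: three passing checks and one failing check.
sample = [
    Check(True, 100.0),
    Check(True, 200.0),
    Check(False, 150.0),
    Check(True, 150.0),
]
print(summarize(sample))
```

Whether failed checks count toward the mean response time is a design choice. This sketch includes them, and the monitor itself may do otherwise.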

Daily Status Overview

Historical Performance

Dec-2025

Monthly Uptime: 99.78%
Monthly Response Time: 220ms

Nov-2025

Monthly Uptime: 99.86%
Monthly Response Time: 222ms

Oct-2025

Monthly Uptime: 100%
Monthly Response Time: 210ms

Sep-2025

Monthly Uptime: 100%
Monthly Response Time: 225ms

Aug-2025

Monthly Uptime: 99.87%
Monthly Response Time: 465ms

Jul-2025

Monthly Uptime: 99.87%
Monthly Response Time: 198ms

Jun-2025

Monthly Uptime: 100%
Monthly Response Time: 241ms

May-2025

Monthly Uptime: 100%
Monthly Response Time: 232ms

Apr-2025

Monthly Uptime: 100%
Monthly Response Time: 252ms

Related Uptime Monitors

Explore uptime status for similar tools that also have monitoring enabled.

LLM Optimize

Status: Issues

Rank Higher in AI Engines Recommendations

LLM Optimize provides professional website audits to help you rank higher in LLMs like ChatGPT and Google's AI Overview, outranking competitors with tailored, actionable recommendations.

Last checked: 12 hours ago

Reva

Status: Operational

Use the right LLM for your task

Reva helps businesses test AI configurations and compare LLM outcomes to ensure optimal performance for their specific tasks, focusing on outcome-driven AI testing and model evaluation.

Last checked: 4 hours ago

CentML

Status: Issues

Better, Faster, Easier AI

CentML streamlines LLM deployment, offering advanced system optimization and efficient hardware utilization. It provides single-click resource sizing, model serving, and supports diverse hardware and models.

Last checked: 16 hours ago

Adaline

Status: Operational

Ship reliable AI faster

Adaline is a collaborative platform for teams building with Large Language Models (LLMs), enabling efficient iteration, evaluation, deployment, and monitoring of prompts.

Last checked: 4 hours ago

BenchLLM

Status: Operational

The best way to evaluate LLM-powered apps

BenchLLM is a tool for evaluating LLM-powered applications. It allows users to build test suites, generate quality reports, and choose between automated, interactive, or custom evaluation strategies.

Last checked: 3 hours ago View Status -

Operational

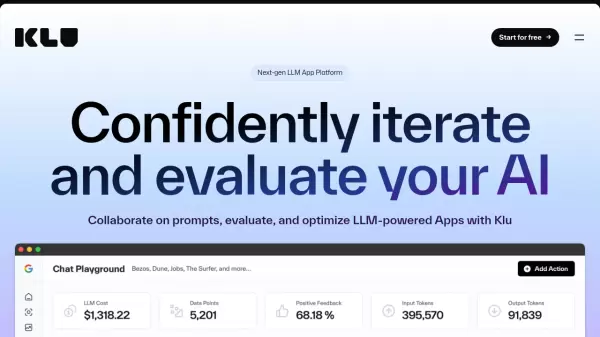

Operationalklu.ai

Next-gen LLM App Platform for Confident AI Development

Klu is an all-in-one LLM App Platform that enables teams to experiment, version, and fine-tune GPT-4 Apps with collaborative prompt engineering and comprehensive evaluation tools.

Last checked: 3 hours ago